🏗️☕🤖 The Internal Agent Platform: One Roastery, Many Cafés

Ever feel like you’re drowning in a sea of “point solutions”? One day it’s a Slack bot for checking logs, the next it’s a CLI tool for linting, and by Thursday you’ve got three different “AI assistants” that all give different advice on the same bloody problem.

It’s a proper faff, isn’t it?

In the platform engineering world, we’ve spent years trying to centralise and simplify. We don’t want five ways to deploy a container; we want one golden path that abstracts away the complexity. So why are we letting our internal AI tools turn into a fragmented nightmare?

Instead of building 10 different bots, we should be building an Internal Agent Platform.

NOTE: I think I might have gone down a rabbit hole; I started with the intent of building a remote subagent for Gemini CLI and ended up with way more 😄

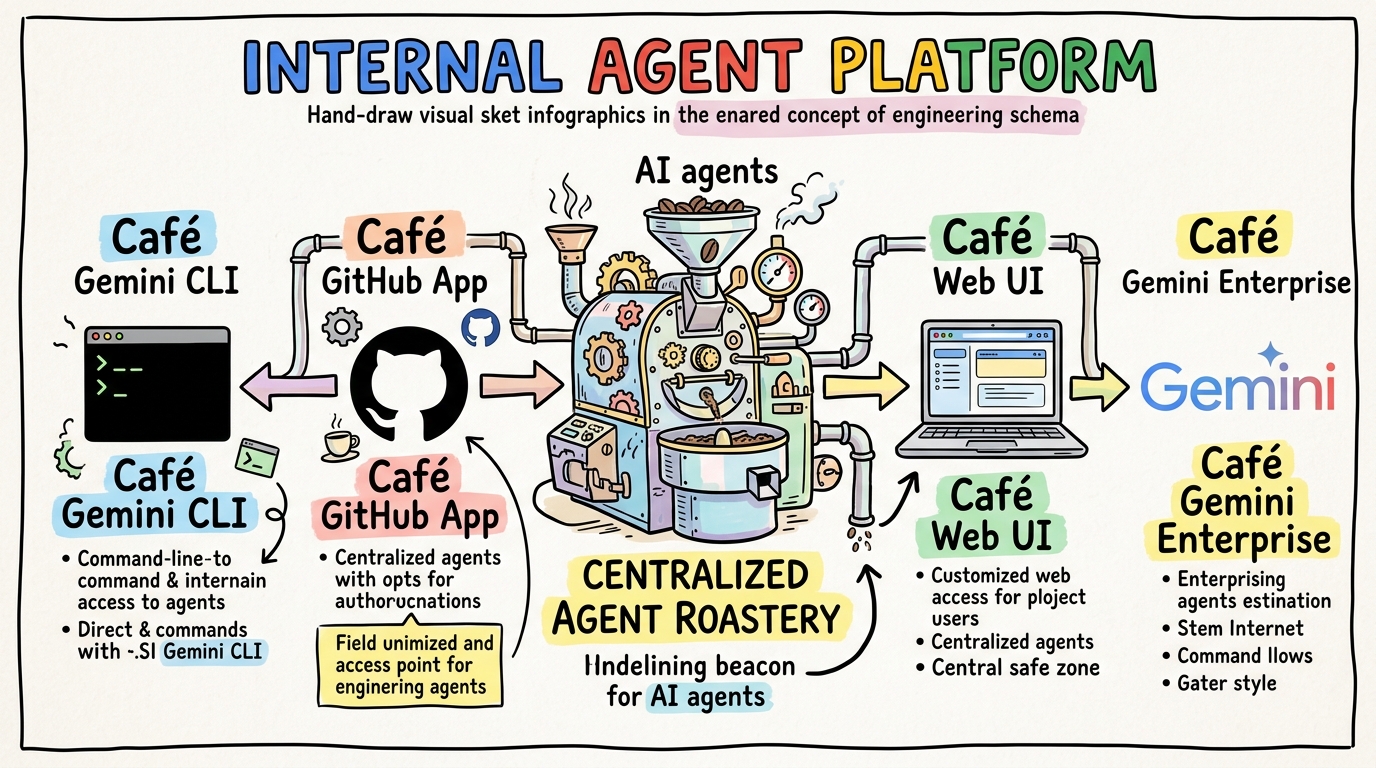

☕ The Roastery vs. The Cafés

Think of it like coffee. You don’t want every tiny café roasting its own beans in a back room with varying levels of quality. You want a central Roastery—a place where the expertise, the quality control, and the “secret sauce” live.

In our world, the Roastery is a centralised Intelligence API.

Now, full disclosure: for my proof of concept, I just knocked together a quick Node.js agent hosted on Google Cloud Run. It has one job: to be the most expert “Security and QA Engineer” in the building. It uses Gemini 3.1 Flash and is packed with specialised system instructions to hunt for bugs and audit code.

But here’s the thing: while I’ve started with a security auditor, the pattern works for anything in the Software Development Life Cycle (SDLC). The possibilities are endless. You could build a roastery for infrastructure-as-code linting, refactoring “sausage-fingered” legacy code, or even hunting down sneaky technical debt. The point isn’t the what—it’s the how.

It’s a great start, but to make it “production ready,” I’m already looking at porting the brain over to the Agent Development Kit (ADK). Using a standardised kit means we can bake in best practices for telemetry, safety, and tool use right out of the box. You can even follow the progress on GitHub Issue #16.

But here’s the clever bit: that single API serves every “Café” in the company.

📡 One Agent, Every Surface

Because we built the intelligence as a standalone service, we can roll it out to wherever our developers are actually working. We don’t ask them to come to us; we send the agent to them.

- The Web Portal: The “Grand Café” management dashboard for seeing the big picture and historical bean supply.

- The GitHub Bot: Our reliable “Drive-Thru” gatekeeper that automatically audits every PR order using webhooks.

- Gemini CLI: A “Quick Espresso Shot” terminal assistant for real-time, ad-hoc audits at your desk.

- Gemini Enterprise: Placed directly into Gemini Enterprise for a proper “Office Brew” integration.

The logic is identical. The persona is consistent. Whether it’s Markdown for us humans or structured JSON for our automated harnesses, the results are reproducible. It’s one roastery, many cafés.
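To make the "same findings, different surface" idea concrete, here is a minimal sketch of one audit result rendered two ways. The result shape and the function names (`toMarkdown`, `toJson`, `render`) are made up for illustration and are not taken from the demo repo.

```javascript
// Hypothetical audit result; the field names are illustrative only.
const auditResult = {
  summary: "1 high-severity issue found",
  findings: [
    { severity: "high", file: "src/db.js", line: 42, message: "SQL built by string concatenation" },
  ],
};

// Human-facing surfaces (PR comment, CLI) get Markdown.
function toMarkdown(result) {
  const rows = result.findings.map(
    (f) => `- **${f.severity.toUpperCase()}** \`${f.file}:${f.line}\` ${f.message}`
  );
  return [`## Audit: ${result.summary}`, "", ...rows].join("\n");
}

// Automated harnesses get the same data as JSON.
function toJson(result) {
  return JSON.stringify(result);
}

// One entry point: the caller picks the format, the findings never change.
function render(result, format) {
  if (format === "markdown") return toMarkdown(result);
  if (format === "json") return toJson(result);
  throw new Error(`Unsupported format: ${format}`);
}

console.log(render(auditResult, "markdown"));
```

The point of the split is that formatting lives at the edge; the audit itself is produced once and never depends on which café asked for it.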

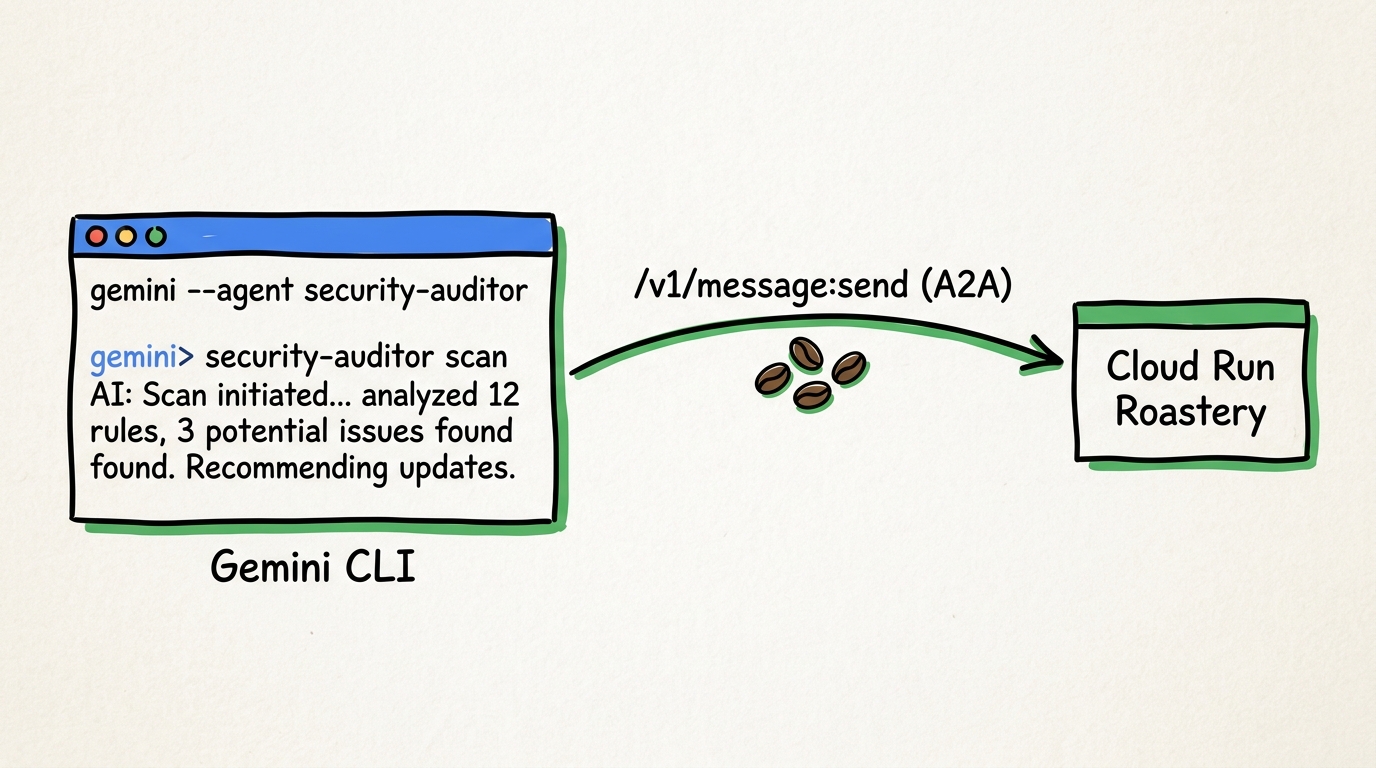

🔌 A2A: The Universal Plug

You might be wondering: “Rob, how do you manage to plug the same agent into all these different harnesses without rewriting it every time?”

The answer is the A2A (Agent-to-Agent) protocol.

By implementing a standardised /v1/message:send endpoint that speaks the A2A schema (specifically version 0.3.0 in our roastery), our agent becomes “plug-and-play.” This isn’t just a custom hack; it’s part of the official Remote Agents capability in the Gemini CLI.
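As a rough sketch of what a harness might send to that endpoint, the snippet below builds a request body and reads text back out of a message. The field names (`message`, `role`, `parts`, `kind`, `messageId`) are my assumption about the A2A message shape and should be checked against the 0.3.0 specification; the helper names are hypothetical.

```javascript
// Build a body for POST /v1/message:send.
// ASSUMPTION: field names follow my reading of the A2A message shape;
// verify them against the A2A 0.3.0 spec before relying on this.
function buildSendMessageBody(text, messageId) {
  if (typeof text !== "string" || text.length === 0) {
    throw new Error("text must be a non-empty string");
  }
  return {
    message: {
      role: "user",
      parts: [{ kind: "text", text }],
      messageId,
    },
  };
}

// Collect the text parts of a message into one string.
// A harness could use this on the agent's reply.
function extractText(message) {
  return message.parts
    .filter((part) => part.kind === "text")
    .map((part) => part.text)
    .join("\n");
}

const body = buildSendMessageBody("Audit this diff for injection bugs", "msg-001");
console.log(JSON.stringify(body, null, 2));
```

A harness would then POST this body as JSON to the agent's base URL plus /v1/message:send. Because every surface builds the same body, adding a new café is mostly a matter of wiring up transport and auth.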

When you use the Gemini CLI, it doesn’t just run a local script; it acts as a bridge, securely offloading the heavy lifting to our high-performance Cloud Run service.

The following is an example of the Gemini CLI subagent definition:

[Subagent definition not preserved in this copy.]

This “Remote Agent” pattern is a game-changer. It means your local machine doesn’t need to be a powerhouse, and your platform team can update the agent’s brain in one place without everyone having to npm update their CLI.

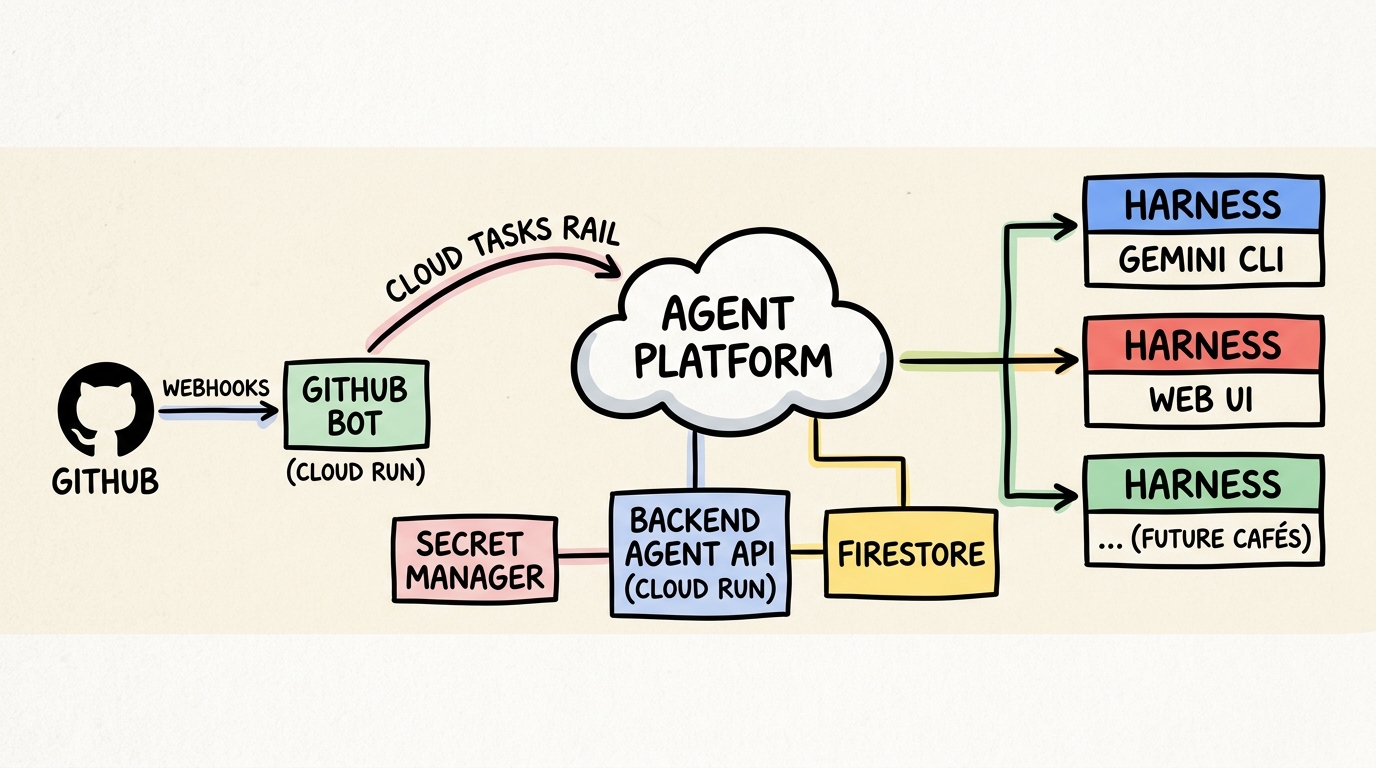

Waiters and Ticket Rails

A professional platform agent is only as good as its harnesses. You can’t just fire-and-forget an AI request and hope for the best, especially when dealing with long-running audits.

We use Google Cloud Tasks as our “ticket rail.” When a GitHub webhook hits our bot, the bot doesn’t start the analysis immediately. It’s just the waiter taking the order. It pops a task onto the rail, acknowledges the webhook, and lets the Roastery process the job reliably in the background.

This decoupling means if the AI takes 30 seconds to “brew” a complex security report, our webhook listener isn’t hanging, and we don’t lose jobs if a service blips.
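Here is a minimal sketch of the waiter and ticket rail pattern. An in-memory array stands in for the Cloud Tasks queue so the example runs on its own; `enqueueAudit`, `handleWebhook`, and `drainRail` are hypothetical names, and the event fields used are the standard ones from a GitHub pull request webhook payload.

```javascript
// In-memory stand-in for the ticket rail. In the real setup this would be
// a Cloud Tasks queue; the array only illustrates the pattern.
const ticketRail = [];

// Hypothetical enqueue helper: records the job and returns straight away.
function enqueueAudit(job) {
  ticketRail.push(job);
  return ticketRail.length;
}

// The "waiter": takes the order, pops it on the rail, acknowledges quickly.
// It never calls the agent itself.
function handleWebhook(event, enqueue = enqueueAudit) {
  if (!event || !event.pull_request) {
    return { status: 400, body: "not a pull request event" };
  }
  enqueue({
    repo: event.repository.full_name,
    prNumber: event.pull_request.number,
    headSha: event.pull_request.head.sha,
  });
  return { status: 202, body: "queued" };
}

// The "roastery" side: a worker drains the rail in the background.
// auditFn is a placeholder for the call to the agent.
async function drainRail(auditFn) {
  const results = [];
  while (ticketRail.length > 0) {
    const job = ticketRail.shift();
    results.push(await auditFn(job));
  }
  return results;
}

const ack = handleWebhook({
  repository: { full_name: "acme/shop" },
  pull_request: { number: 7, head: { sha: "abc123" } },
});
console.log(ack.status, ticketRail.length);
```

The webhook response no longer depends on how long the audit takes. A managed queue adds what the array cannot: persistence and retries if the worker blips.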

🧪 The Demo: Brewing it Yourself

If you want to see how this actually looks in code, I’ve put together a proper hands-on demo over at github.com/sapientcoffee/security-agent.

Just a heads-up: it’s a Work in Progress. I’m currently porting the POC logic to the ADK to make it more sturdy, but the core architecture is all there for you to poke around.

Here is how the whole thing is roasted, ground, and served in our demo environment:

Want to see it in action? Check out the Demo Recording on YouTube where I walk through the various surfaces and the A2A flow.

The Personas

- The AI Barista (The Agent): The brain in the roastery. Its only job is to be a specialised security and QA expert.

- The Developer: The one in the trenches. They interact with the agent via the CLI for ad-hoc checks or get helpful nudges directly on their PRs.

- The Platform Lead: The one managing the roastery. They use the Web UI to oversee integrations and track audit history across the entire org.

The Workflows

- The Automated Gatekeeper: A developer pushes code, a GitHub webhook fires, and the agent automatically reviews the diff. No manual faff required.

- The Ad-hoc Sniff Test: A developer uses the Gemini CLI to instantly audit a local file before they even commit. Proper fast feedback.

- The Management View: A security lead uses the web dashboard to run a manual audit on a repo URL or review the latest trends in audit findings.

🚀 Platform Engineering

Building an Internal Agent Platform is about more than just security audits. It’s about putting on your “platform engineering hat” and solving the specific frictions that slow your teams down.

I’ve built this roastery on the Google Cloud Agent Platform. By leveraging the existing Google Cloud primitives and building my own value-add components (like the A2A bridge and Cloud Tasks harness), we’ve managed to build a sturdy platform that actually gets the job done without the usual faff.

And with all the new capabilities announced this week at Google Cloud Next, I’m already looking at how to bake in even more enterprise-grade features.

The pattern is the same, whether you’re auditing code, managing infrastructure, or onboarding new starters. Once you have the platform in place, you can roll out new capabilities as fast as you can brew a fresh pot. The possibilities across the SDLC are endless and give us an opportunity to reimagine how things are done.

- Identify the Friction: Don’t just “do AI”—solve a problem. (Check out the latest DORA Report and ai.dora.dev for some proper research on finding the right value).

- Build the Roastery: Use a solid base like the Google Cloud Agent Platform.

- Standardise the Plug: Leverage frameworks like ADK and A2A rather than reinventing the wheel. The speed of change in this space is mental; you don’t want to be left behind because you spent months building plumbing that’s already been standardised.

- Engineer the Harnesses: Get that roastery connected. This means making it available in Gemini CLI or Gemini Enterprise, building your own custom web front-end for the specific use case, or plugging it directly into your source control via a GitHub App.

By treating agents as a professional platform service rather than isolated toys, you get consistency, reuse, and a proper good developer experience.

So, what’s the biggest friction in your roastery? Grab a brew, have a think, experiment and see if AI can help (top tip: you don’t have to apply AI to remove all frictions). If the experiment is successful, turn it into an agent that can be used by more people.

Takeaway: The Unified Agent Card

Every great platform agent should be able to describe itself. Here is how our agent-card.js tells any harness exactly what it can do:

[Agent card listing not preserved in this copy.]
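For a feel of what such a card might contain, here is a hypothetical sketch. It is not the demo's actual agent-card.js: every value is a placeholder, the field names follow my understanding of the A2A agent card and should be checked against the spec, and the list of "required" keys in the checker is my own assumption.

```javascript
// Hypothetical agent card; values are placeholders, not the demo's real card.
const agentCard = {
  protocolVersion: "0.3.0",
  name: "Security and QA Auditor",
  description: "Reviews code for security bugs and quality issues.",
  url: "https://agent.example.com", // placeholder URL
  version: "0.1.0",
  capabilities: { streaming: false },
  defaultInputModes: ["text/plain"],
  defaultOutputModes: ["text/markdown", "application/json"],
  skills: [
    {
      id: "security-audit",
      name: "Security audit",
      description: "Audits a diff or file for common vulnerabilities.",
      tags: ["security", "code-review"],
    },
  ],
};

// Minimal sanity check a harness might run before trusting a card.
// The required list is an assumption for this sketch, not the spec's.
function missingCardFields(card) {
  const required = ["name", "description", "url", "version", "skills"];
  return required.filter((key) => card[key] === undefined);
}

console.log(missingCardFields(agentCard));
```

Because the card is plain data, each harness can discover the agent's skills and output formats without any surface-specific configuration.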

☕ Wrapping Up

Building an Internal Agent Platform isn’t about chasing the latest AI hype—it’s about applying good old-fashioned platform engineering principles to a new set of tools. By building a centralised Roastery and standardising how we connect it to our development workflows, we’re not just making things “faster”; we’re making them better, safer, and a lot less of a faff.

It’s early days for our security auditor, and porting it to the ADK is going to be a fun challenge. But the foundation is proper sturdy, and I’m chuffed with how it’s coming together. And remember, while we started with security, the possibilities for improving the entire SDLC are truly endless. Whether it’s testing, docs, or infra—if there’s friction, there’s a brew waiting to be roasted.

What about you? Are you building isolated bots, or are you looking at the bigger picture? I’d love to hear how you’re solving frictions in your own roasteries.

Grab a proper brew, drop a comment, and let’s crack on with building something grand.